My colleagues and I love watching football, so I figured that quoting a former NFL player should be a good way to start a blog post:

If you align expectations with reality, you will never be disappointed.

Terrell Owens

I am pretty sure Terrell Owens wasn’t exactly thinking about media streaming when he said that. However, if we interpret the quote a bit differently and align our expectations (optimal media playback) with the reality of our media segments, we will not be disappointed either.

What happens in our MPD?

When it comes to media streaming, manifest files are a crucial component of the streaming chain. In a manifest file, the player finds all necessary information on media timing, available media qualities and location of the media segments. In MPEG-DASH, manifest files have an XML structure and are called Media Presentation Description (MPD).

MPD example – audio and video segments are not aligned

When looking into a live DASH MPD, you might have stumbled onto something like the following:

<AdaptationSet contentType="video">

<SegmentTemplate timescale="90000">

<SegmentTimeline>

<S d="180000" r="149" t="143220940740000" />

</SegmentTimeline>

</SegmentTemplate>

</AdaptationSet>

<AdaptationSet contentType="audio">

<SegmentTemplate timescale="48000">

<SegmentTimeline>

<S d="96256" r="2" t="76384501728256" />

<S d="95232" />

<S d="96256" r="2" />

<S d="95232" />

<S d="96256" r="2" />

<S d="95232" />

.... lots of lines later

<S d="96256" r="2" />

<S d="95232" />

</SegmentTimeline>

</SegmentTemplate>

</AdaptationSet>

In this case, the packager created an MPD file using the SegmentTimeline element.

A detailed look into audio and video adaptation sets

The video adaptation set looks very convenient and the segments have a consistent duration of 2 seconds (180000/90000). The packager makes use of the @r attribute to signal a repetition in video segment duration. Consequently, only one line is required to list all available and upcoming video segments.

If we look at the audio adaptation set, we immediately notice a major difference. Instead of having a single <S> element like in the video adaptation set, the audio adaptation set requires multiple <S> elements. In other words, although the combined duration of all audio segments is similar to the combined duration of all video segments, the number of required entries in the DASH MPD is significantly higher for audio.

Additionally, instead of having a consistent segment duration of 2 seconds, audio segment durations vary in a repeating pattern:

<S d="96256" r="2" />

<S d="95232" />

The first three segments in each iteration of the pattern have a duration of about 2.0053 seconds (96256/48000), while the last segment has a duration of 1.984 seconds (95232/48000).
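To double-check these numbers, the following sketch expands a SegmentTimeline into per-segment durations. It is a minimal illustration rather than how any particular player parses an MPD: the `segment_durations` helper and the shortened `AUDIO_XML` snippet are our own names, the XML namespace of a real MPD is left out, and negative @r values (repeat until the next entry) are not handled.

```python
import xml.etree.ElementTree as ET
from fractions import Fraction

# Audio SegmentTemplate from the example, shortened to two pattern iterations.
AUDIO_XML = """
<SegmentTemplate timescale="48000">
  <SegmentTimeline>
    <S d="96256" r="2" t="76384501728256" />
    <S d="95232" />
    <S d="96256" r="2" />
    <S d="95232" />
  </SegmentTimeline>
</SegmentTemplate>
"""


def segment_durations(template_xml):
    """Expand every <S> entry into exact per-segment durations in seconds."""
    template = ET.fromstring(template_xml)
    timescale = int(template.get("timescale", "1"))
    durations = []
    for s in template.iter("S"):
        repeat = int(s.get("r", "0"))  # @r counts *additional* repetitions
        duration = Fraction(int(s.get("d")), timescale)
        durations.extend([duration] * (repeat + 1))
    return durations


durations = segment_durations(AUDIO_XML)
print([round(float(d), 4) for d in durations])
print(float(sum(durations[:4])))  # one pattern iteration: 8.0 seconds
```

Using `Fraction` instead of floats keeps the arithmetic exact, which makes it easy to see that one iteration of the pattern adds up to exactly 8 seconds.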

The consequences of unaligned media segments

Before we examine the cause of the inconsistent audio segment durations, we discuss the consequences:

Oversized manifest files

A major downside of manifest files with SegmentTimeline elements and unaligned media segments is the size of the manifest file itself.

Let’s assume that we have a DVR window of two hours. Consequently, we need two hours of segment information in our manifest. In our example, the audio segments have a duration of approximately 2 seconds. Hence, a single instance of the pattern depicted in Listing 2 (two lines in the MPD) adds up to 8 seconds of audio duration. This leaves us with the following equation to calculate the number of lines required in the MPD:

Number of lines = (7200 / 8) * 2

Number of lines = 1800

1800 lines in the MPD, just for defining the audio segments. If the manifest is refreshed every two seconds, a large manifest causes a significant amount of traffic on the CDN.
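The same calculation can be written down as a small sketch. The `audio_timeline_cost` function and its parameters are hypothetical names chosen for this illustration, and the byte count is only a rough, uncompressed estimate: it counts the characters of the two `<S>` lines from our example plus a newline each, and ignores indentation, the rest of the manifest and HTTP compression.

```python
# One 8-second iteration of the audio pattern from our example MPD.
PATTERN = ['<S d="96256" r="2" />', '<S d="95232" />']


def audio_timeline_cost(dvr_window_s, pattern_duration_s=8, refresh_period_s=2):
    """Return (lines, bytes per manifest, bytes per client per hour)."""
    patterns = dvr_window_s // pattern_duration_s
    lines = patterns * len(PATTERN)
    # +1 per line for the newline character; indentation is ignored.
    bytes_per_manifest = patterns * sum(len(line) + 1 for line in PATTERN)
    refreshes_per_hour = 3600 // refresh_period_s
    return lines, bytes_per_manifest, bytes_per_manifest * refreshes_per_hour


lines, size, hourly = audio_timeline_cost(7200)
print(lines)  # 1800
print(size, "bytes of audio timeline per manifest request")
print(hourly, "bytes per client and hour at a 2 second refresh period")
```

Changing `dvr_window_s` or `refresh_period_s` gives a feeling for how quickly the audio timeline alone adds up.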

Player performance considerations

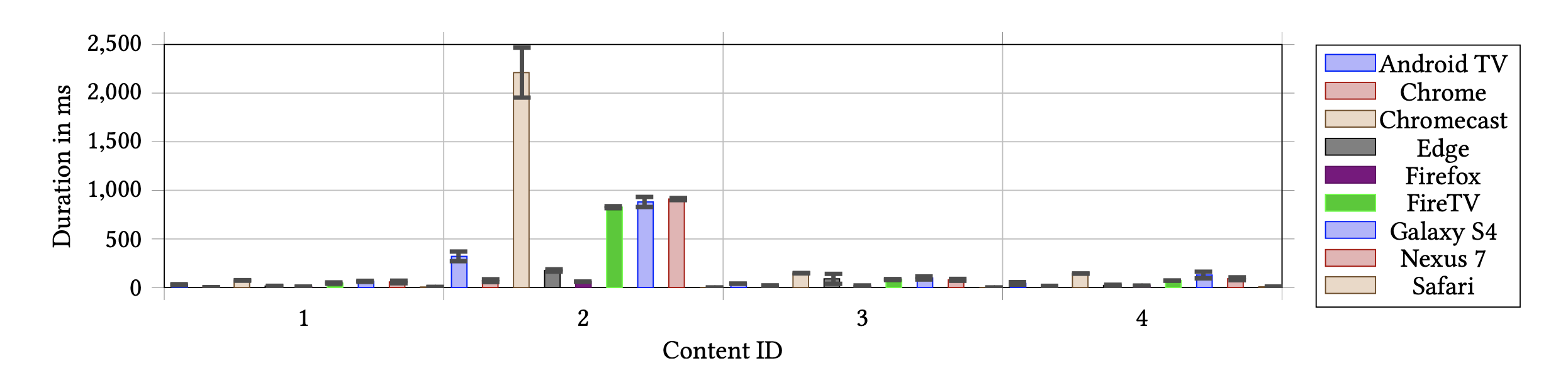

Another important aspect, which is often overlooked, is the parsing overhead on the client. In our paper, “Performance considerations of HTML5-based dynamic packaging for media streaming”, we examined the parsing duration for manifest files with different sizes on multiple devices. An example is depicted in the Figure below:

The content with ID number 2 had a manifest size of 192 kB. A first-generation Chromecast requires over 2 seconds to parse the manifest and convert it to a JSON representation. In the worst case, the parsing time exceeds the manifest refresh period, which ultimately results in playback issues.

Further drawbacks

There are further drawbacks of unaligned media segments. It is much easier to trim content and insert advertisements if the segments are aligned. A specific splice point can easily be mapped to segment boundaries. Moreover, from a player’s perspective, a playback seek is more accurate and easier to implement.

What causes segment misalignments?

Now that we know why it is important to align audio and video segments, we can further explore what causes the misalignments.

Going back to our initial example: why not simply package audio segments with an exact duration of 2 seconds like we did for video?

Audio sampling rate and audio packet size

In Advanced Audio Coding (AAC), the number of audio frames per packet is fixed at 1024. Our example stream has an audio sampling rate of 48000 Hz.

Now, if we want to create 2 second audio segments, we need to wrap 96000 audio frames into packets with a size of 1024 frames:

96000 / 1024 = 93.75

This does not add up: we cannot create 3/4 of a packet. Let’s try again with the segment durations we identified in our example, 2.0053 and 1.984 seconds, respectively:

(2.00533333 * 48000) / 1024 = 94

(1.984 * 48000) / 1024 = 93

This works out perfectly: in both cases, we end up with a whole number of packets.
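The check above is easy to repeat for other durations. The sketch below assumes the values from our example (48000 Hz, 1024 audio frames per packet); `packets_per_segment` is a hypothetical helper name, and exact fractions are used so that no rounding hides a partial packet.

```python
from fractions import Fraction

FRAMES_PER_PACKET = 1024  # fixed packet size from our AAC example


def packets_per_segment(duration_s, sample_rate=48000):
    """Exact number of audio packets that fit into a segment of duration_s."""
    return Fraction(duration_s) * sample_rate / FRAMES_PER_PACKET


print(packets_per_segment(2))                       # 375/4, i.e. 93.75
print(packets_per_segment(Fraction(96256, 48000)))  # 94
print(packets_per_segment(Fraction(95232, 48000)))  # 93

# Three segments with 94 packets plus one with 93 packets average 93.75,
# which is why the pattern as a whole stays at 2 seconds per segment.
print(Fraction(3 * 94 + 93, 4))                     # 375/4
```

A duration can be packaged without leftovers only if the result has a denominator of 1.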

Recap

Ok, let’s do a quick summary before we do some more math. By now, we know that non-aligned media segments can lead to large manifest files. We definitely want to avoid that in order to save CDN costs and avoid client playback problems.

In addition, we now know that our packager is not broken. There is a reason why the audio segment durations are slightly shorter or longer than two seconds: the sampling rate and the fixed number of audio frames per packet do not allow us to create audio segments with a duration of exactly two seconds.

Finally: how to align our media segments

So what can we do to align our media segments? We simply have to choose a different segment duration!

Let’s enhance our example with some more values. We assume our video has 25 frames per second (FPS). Essentially, what we want to achieve is to have the duration of all video frames of a segment to be equal to the duration of all audio frames of a segment. This leads us to the following formula:

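x * 1/25 = y * (1024/48000)

Here, x is the number of video frames and y is the number of audio packets (1024 audio frames each) in a segment. Solving for the ratio and reducing the fraction gives:

x/y = (25 * 1024) / 48000 = 25600/48000

x/y = 8/15

This means that 8 video frames and 15 audio packets have the same duration of 0.32 seconds: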

8/25 = (15*1024) / 48000 = 0.32 seconds

That’s it! The minimal segment duration we need in order to align audio and video segments is 0.32 seconds. Now we can multiply 0.32 by positive integer values to increase the segment duration.
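For other frame rates or sampling rates, the same reduction can be left to a few lines of code. This is a minimal sketch under the assumptions of our example (integer frame rate, 1024 audio frames per packet); `minimal_aligned_duration` is a name we made up for illustration.

```python
from fractions import Fraction


def minimal_aligned_duration(fps, sample_rate=48000, frames_per_packet=1024):
    """Return (video frames, audio packets, duration in seconds) of the
    shortest segment that holds a whole number of both."""
    # x / y = (frames_per_packet / sample_rate) / (1 / fps);
    # Fraction reduces this ratio to lowest terms automatically.
    ratio = Fraction(frames_per_packet, sample_rate) * fps
    video_frames, audio_packets = ratio.numerator, ratio.denominator
    return video_frames, audio_packets, Fraction(video_frames) / fps


frames, packets, duration = minimal_aligned_duration(25)
print(frames, packets, float(duration))  # 8 15 0.32
```

Every integer multiple of the returned duration is aligned as well, so the result can be scaled up to whatever segment length suits the use case.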

Typical audio and video segment durations

The tables below show some typical values for creating aligned audio and video segments at 25 fps and 30 fps, each with an audio sampling rate of 48000 Hz. The audio column counts packets of 1024 audio frames each.

25 fps:

| Segment duration in sec | Video frames | Audio packets |
| --- | --- | --- |
| 1.92 | 48 | 90 |
| 3.84 | 96 | 180 |
| 6.40 | 160 | 300 |

30 fps:

| Segment duration in sec | Video frames | Audio packets |
| --- | --- | --- |
| 1.60 | 48 | 75 |
| 4.80 | 144 | 225 |
| 6.40 | 192 | 300 |
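The table rows are simply integer multiples of the minimal aligned duration for each frame rate. As a hedged sketch (the helper name `aligned_rows` and the chosen multiples are ours, picked to reproduce the values listed above), they can be regenerated like this:

```python
from fractions import Fraction


def aligned_rows(fps, multiples, sample_rate=48000, frames_per_packet=1024):
    """List (duration in s, video frames, audio packets) for the given
    integer multiples of the minimal aligned segment duration."""
    ratio = Fraction(frames_per_packet, sample_rate) * fps
    min_frames, min_packets = ratio.numerator, ratio.denominator
    return [
        (Fraction(k * min_frames) / fps, k * min_frames, k * min_packets)
        for k in multiples
    ]


rows_25 = aligned_rows(25, [6, 12, 20])
rows_30 = aligned_rows(30, [3, 9, 12])
for fps, rows in ((25, rows_25), (30, rows_30)):
    for duration, frames, packets in rows:
        print(f"{fps} fps: {float(duration):.2f} s, {frames} frames, {packets} packets")
```

Passing a different list of multiples yields further aligned durations for the same frame rate.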

Conclusion

In this blog post, we’ve identified the downsides of misaligned media segments. The resulting large manifest files can lead to increased CDN costs and performance problems on the client side.

By choosing segment durations for which the duration of all video frames is equal to the duration of all audio frames, misalignments can be resolved.

In one of our next blog posts, we will illustrate how to achieve segment alignment when encoding and packaging with Elemental Live and Elemental Delta.

If you have any additional questions regarding our DASH activities or dash.js in particular, feel free to check out our website.

Andreas Backlund says:

Hello!

Good article. Do you know if the same thing can be implemented for AC-3 or perhaps a combination of AAC and AC-3 in the same MPD. Perhaps this is not possible…

I have used this webpage to simplify the calculations of GOP sizes to use: http://anton.lindstrom.io/gop-size-calculator/

Regards,

Andreas