Affective computing, also known as Emotion AI, is an area of artificial intelligence that focuses on developing systems and technologies capable of recognizing, interpreting, and responding to human emotions (read more in our Affective Computing 101). In this overview article, we take a step back and look at emotions as the field’s core subject: What exactly are emotions, why do we have them, and is it possible to measure them? (Spoiler: Yes, of course it is.)

Emotion is …

Do you remember the Love is … comic strips? Each strip contains the caption “Love is …” and a thought completing it. Now try doing that with Emotion. Depending on whom you ask, you will learn about different aspects of what emotions are but, as surprising as it sounds, even scientists struggle to find a universal, “one size fits all” definition of emotion.

Everyone knows what an emotion is, until asked to give a definition. Then, it seems, no one knows.

Fehr, Beverly & Russell, James A. (1984). Concept of emotion viewed from a prototype perspective. Journal of Experimental Psychology: General, 113(3), 464–486.

Emotions are a complex phenomenon, involving psychological, physiological, behavioral, cognitive, social, and cultural aspects. That’s why it’s so challenging to find a universal definition for all scientific fields and research interests. At this point, however, we don’t want to take a deep dive into the brain and the neuroscience of which brain regions are associated with individual emotions. Our main interest is the question of how emotions manifest and how we (humans and machines) register them.

Mixed emotions

Emotions are, for the most part, tied to specific objects or events and, thus, short-lived. They can be triggered through social interactions, external events and stimuli, personal experiences and memories, or hormonal changes. Affective states, on the other hand, refer to the broader spectrum of feelings and moods that can be more enduring than specific emotions. Simply put, they are psychosomatic events with communicative, motivational, and cognitive consequences, influencing a person’s overall emotional landscape.

When we perceive somebody else’s emotions, this happens consciously and unconsciously based on various cues. In general, emotions surface through

- physiological reactions (e.g., an increase in heart rate, sweating, or blushing and paling due to the dilation or constriction of vessels, respectively),

- behavior (changes in facial expressions, gestures, posture, or voice pitch),

- and a subjective, more or less conscious component: “feelings”.

In scientific settings, we can measure emotions by analyzing cues through multimodal data acquisition and AI-based analysis. This, however, does not mean that a machine can “read” human subjects as some dystopian scenarios might suggest. Instead, emotion analysis is the result of pattern recognition: physiological and behavioral changes linked to various emotions are identified and classified, and algorithms are then trained on this data. In the next step, these trained algorithms can detect similar patterns in new data.
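To make the train-then-detect idea more concrete, here is a minimal sketch in Python. It uses a nearest-centroid classifier, a deliberately simple stand-in for the pattern recognition methods used in practice. The feature values, the labels `calm` and `stressed`, and the function names `train` and `predict` are all assumptions made up for illustration; they are not real measurements or a real product API.

```python
from math import dist
from statistics import mean

# Hypothetical, made-up training samples for illustration only:
# (heart rate in beats per minute, skin conductance in microsiemens)
TRAINING_DATA = {
    "calm": [(62.0, 2.1), (66.0, 2.4), (70.0, 2.0)],
    "stressed": [(95.0, 6.8), (102.0, 7.5), (98.0, 6.2)],
}


def train(samples_by_label):
    """Compute one centroid (mean feature vector) per label."""
    return {
        label: tuple(mean(column) for column in zip(*samples))
        for label, samples in samples_by_label.items()
    }


def predict(centroids, sample):
    """Return the label whose centroid lies closest to the new sample."""
    return min(centroids, key=lambda label: dist(centroids[label], sample))


# Step 1: train on labeled data. Step 2: detect a similar pattern in new data.
centroids = train(TRAINING_DATA)
print(predict(centroids, (99.0, 7.0)))
```

In this toy setup, the two features are on different scales, so heart rate dominates the distance. A realistic pipeline would normalize the features and use far more data and modalities.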

Despite these advancements, detecting emotions remains challenging and somewhat unreliable, which is why we prioritize the analysis of affective states (learn more about data acquisition modalities). The detection of affective states is based on said psycho-physiological signals, enabling us to reliably identify stress phases and cognitive overload, for example.

Emotion classification

Experiencing emotions is subjective and deeply personal by nature. So how is it possible to measure the unique feeling and expression of happiness? How do you quantify sadness? When we attempt to detect emotions and affective states, we need to apply an objective classification system, thus reducing the complexity of the phenomenon.

Measure what is measurable and make measurable what is not.

(ascribed to Galileo Galilei)

A crucial step in making things measurable is to define a methodology for the measuring process: Which aspects can be measured? How do we quantify and qualify them? In the case of emotions, this means agreeing on which aspects can be measured (i.e., physical and behavioral reactions) and defining criteria and labels to categorize them.

Boiling down the research discussion quite a bit, we can differentiate two approaches to classifying emotions and describing their structure:

- Emotions are discrete categories.

- Emotions can be described in terms of dimensional models.

Discrete emotion theory distinguishes a small number of basic emotions, for example (and according to American psychologist Paul Ekman’s theory) happy, angry, sad, surprised, frightened, and disgusted. In reality, however, humans use and interpret a lot more than these six basic emotions – a fact recognized by compound emotion categories. Compound emotions are formed through combinations of basic emotions, for example when having a happily surprised vs. an angrily surprised look on your face. In this approach, happily surprised vs. angrily surprised constitute two distinct categories (out of 21 defined compound emotion categories all in all).

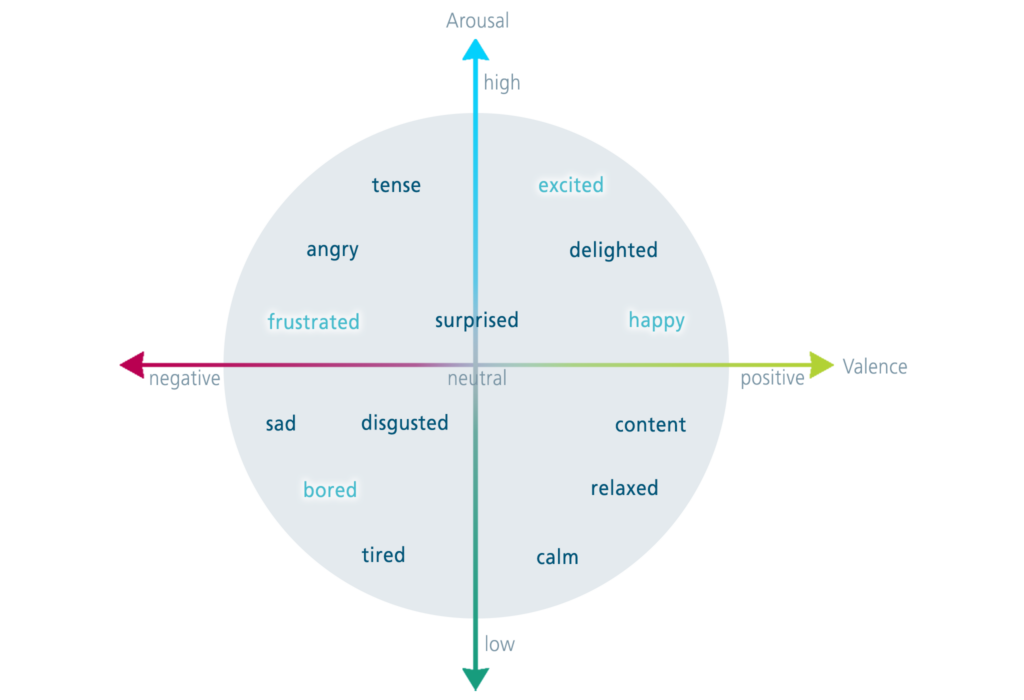

When categorizing emotions in terms of a two-dimensional model, we label whether the predominant emotion is more positive or negative (valence dimension) and the level of arousal (high vs. low). Both dimensions can be incorporated in a circumplex model of emotion, with valence and arousal as the x and y axes and the emotional states positioned at any level of these two dimensions.
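As a rough sketch of how a position in such a two-dimensional model could be handled in code, the following Python example places a (valence, arousal) pair in one of the four quadrants and computes its angle and distance from the center. The value range of -1 to 1, the function name `locate`, and the example words attached to each quadrant are assumptions chosen for illustration, not a standardized label set.

```python
from math import atan2, degrees, hypot

# Illustrative quadrant descriptions, keyed by
# (valence is non-negative, arousal is non-negative).
QUADRANTS = {
    (True, True): "positive valence, high arousal (e.g., excited)",
    (True, False): "positive valence, low arousal (e.g., relaxed)",
    (False, True): "negative valence, high arousal (e.g., tense)",
    (False, False): "negative valence, low arousal (e.g., bored)",
}


def locate(valence, arousal):
    """Place a state in the valence (x) / arousal (y) plane.

    Both inputs are assumed to lie in the range -1.0 to 1.0.
    Returns the quadrant description, the angle in degrees
    (counterclockwise from the positive valence axis), and the
    distance from the neutral center.
    """
    if not (-1.0 <= valence <= 1.0 and -1.0 <= arousal <= 1.0):
        raise ValueError("valence and arousal must lie between -1.0 and 1.0")
    quadrant = QUADRANTS[(valence >= 0, arousal >= 0)]
    angle = degrees(atan2(arousal, valence)) % 360
    intensity = hypot(valence, arousal)
    return quadrant, angle, intensity


quadrant, angle, intensity = locate(0.5, 0.5)
print(quadrant, round(angle), round(intensity, 2))
```

The angle and distance are one way to express that emotional states can sit anywhere in the plane rather than in a fixed set of boxes.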

Making emotions measurable

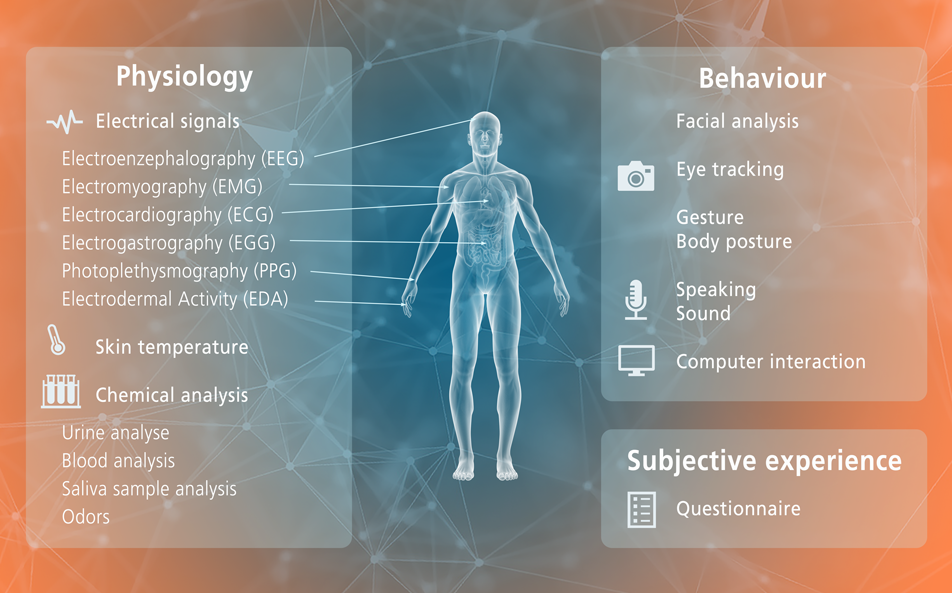

As emotions manifest in recognizable and stereotyped behavioral patterns, we have a prerequisite for standardized data acquisition and analysis methods. Because of the complex nature of emotions and affective states, we depend on multimodal data acquisition and measuring tools to capture the individual components:

- measuring physiological components through electrical signals (e.g., EEG or ECG), skin temperature, or chemical analysis (of urine, blood, saliva, or odor),

- measuring behavioral components through facial analysis, eye tracking, or the analysis of gestures, body posture, and how we sound,

- measuring subjective components (“feelings”) through questionnaires.

Emotion measurement methods are based on the above-mentioned categorical or dimensional approaches. Complex psycho-physiological affective states, for example, are assessed by classifying valence and arousal, i.e., through a dimensional approach. The labelling of discrete emotions, on the other hand, is based on the categorical approach.
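The difference between the two labelling schemes can be sketched as two small data types in Python. This is only an illustration under stated assumptions: the class names `BasicEmotion`, `CategoricalLabel`, and `DimensionalLabel`, the helper `describe`, and the -1 to 1 value range are hypothetical and not taken from any specific tool.

```python
from dataclasses import dataclass
from enum import Enum


class BasicEmotion(Enum):
    """The six basic emotions named in the discrete approach."""
    HAPPY = "happy"
    ANGRY = "angry"
    SAD = "sad"
    SURPRISED = "surprised"
    FRIGHTENED = "frightened"
    DISGUSTED = "disgusted"


@dataclass(frozen=True)
class CategoricalLabel:
    """Categorical approach: exactly one discrete category."""
    emotion: BasicEmotion


@dataclass(frozen=True)
class DimensionalLabel:
    """Dimensional approach: a point on the valence and arousal axes."""
    valence: float  # assumed range: -1.0 (negative) to 1.0 (positive)
    arousal: float  # assumed range: -1.0 (low) to 1.0 (high)

    def __post_init__(self):
        # Reject values outside the assumed range.
        for name in ("valence", "arousal"):
            if not -1.0 <= getattr(self, name) <= 1.0:
                raise ValueError(f"{name} must lie between -1.0 and 1.0")


def describe(label):
    """Render either kind of label as a short, readable string."""
    if isinstance(label, CategoricalLabel):
        return f"discrete emotion: {label.emotion.value}"
    if isinstance(label, DimensionalLabel):
        return (
            f"affective state: valence={label.valence:+.1f}, "
            f"arousal={label.arousal:+.1f}"
        )
    raise TypeError("unsupported label type")


print(describe(CategoricalLabel(BasicEmotion.HAPPY)))
print(describe(DimensionalLabel(valence=-0.6, arousal=0.8)))
```

Keeping the two label types separate makes it explicit which approach a given measurement method follows.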

What a feeling: Why we have emotions — and what they can be good for

Emotions are not just a vital part of the human experience; they also have important functions: Emotions enhance our attention, they affect our decision-making, they motivate us, and we remember emotional events and items better than non-emotional ones (ask any mnemonist).

What is intriguing about emotions is that they are not susceptible to our intentions. We even often mispredict how we would feel (or act) in a hypothetical situation, a phenomenon dubbed “failure of affective forecasting”.

One application area for detecting affective states is health and well-being. We develop hardware and software for detecting human states that go beyond mere affect, using psycho-physiological signals. This allows us to detect stress phases or cognitive overload, which can support therapeutic interventions. Additionally, these solutions can assess a driver’s well-being and comfort, detecting signs of discomfort, stress, or cognitive overload – before accidents happen.

In these contexts, affective computing technologies emerge as valuable tools, providing insightful data that enhances applications where affective states play a crucial role. In addition, they can even help build trust into technology.

Copyright: Adobe Stock / Viktoriia M. – stock.adobe.com
